Achieving high availability rests on having good health checks. HAProxy as an API gateway gives you several ways to do this.

Run your service on multiple servers. Place your servers behind a HAProxy load balancer. Enable health checking to quickly remove unresponsive servers.

These are all steps from a familiar and trusted playbook. Accomplishing them ensures that your applications stay highly availability. What you may not have considered, though, is the flexibility that HAProxy gives you in how you can monitor your servers for downtime. HAProxy offers more ways to track the health of your servers and react quicker than any other load balancer, API gateway, or appliance on the market.

In the previous two blog posts of this series, you were introduced to using HAProxy as an API gateway and implementing OAuth authorization. You’ve seen how placing HAProxy in front of your API services gives you a simplified interface for clients. It provides easy load balancing, rate limiting and security—combined into a centralized service.

In this blog post, you will learn several ways to create highly available services using HAProxy as an API gateway. You’ll get to know active and passive health checks, how to use an external agent, how to monitor queue length, and strategies for avoiding failure. The benefits of each health-checking method will become apparent, allowing you to make the best choice for your scenario.

Related Articles:

Using HAProxy as an API Gateway, Part 1 [Introduction]

Using HAProxy as an API Gateway, Part 2 [Authentication]

Using HAProxy as an API Gateway, Part 4 [Metrics]

Using HAProxy as an API Gateway, Part 5 [Monetization]

Using HAProxy as an API Gateway, Part 6 [Security]

Active health checks

The easiest way to check whether a server is up is with the server directive’s check parameter. This is known as an active health check. It means that HAProxy polls the server on a fixed interval by trying to make a TCP connection. If it can’t contact a server, the check fails and that server is removed from the load balancing rotation.

Consider the following example:

| backend apiservers | |

| balance roundrobin | |

| server server1 192.168.50.3:80 check |

When you’ve enabled active checking, you can then add other, optional parameters, such as to change the polling interval and/or the number of allowed failed checks:

Parameter | What it does |

| The interval between checks (defaults to milliseconds, but you can set seconds with the s suffix). |

| The interval between checks when the server is already in the down state. |

| The number of failed checks before marking the server as down. |

| The number of successful checks before marking a server as up again. |

| The interval between checks when up, but a check has failed; or the interval when down, but a check has passed. You are transitioning towards up or down. This allows you to speed up the interval. |

The next example demonstrates these parameters:

| server server1 192.168.50.3:80 check inter 5s downinter 5s fall 3 rise 3 |

If you’re load balancing web applications, then instead of monitoring a server based on whether you can make a TCP connection, you can send an HTTP request. Add option httpchk to a backend and HAProxy will continually send requests and expect to receive valid HTTP responses that have a status code in the 2xx to 3xx range.

| backend apiservers | |

| balance roundrobin | |

| option httpchk GET /check | |

| server server1 192.168.50.3:80 check |

The option httpchk directive also lets you choose the HTTP method (e.g. GET, HEAD, OPTIONS), as well as the URL to monitor. Having this flexibility means that you can dedicate a specific webpage to be the health-check endpoint. For example, if you’re using a tool like Prometheus, which exposes its metrics on a dedicated page within the application, you could target its URL. Or, you could point HAProxy at the homepage of your website, which is arguably the most important.

You can also set a different IP address and/or port to check by adding the addr and port parameters, respectively, to the server line. In the following snippet, we target port 80 for our health checks, even though normal web traffic is sent to port 443:

| option httpchk GET /check | |

| server server1 192.168.50.3:443 check port 80 |

Some web servers will reject requests that don’t include certain headers, such as a Host header. You can pass HTTP headers with the health check, like so:

| option httpchk GET /check HTTP/1.1\r\nHost:\ mywebsite.com | |

| server server1 192.168.50.3:80 check port 80 |

You can accept only specific responses from the server, such as a specific status code or string within the HTTP body. Use http-check expect with either the status or string keyword. In the following example, only health checks that return a 200 OK response are classified as successful:

| backend apiservers | |

| balance roundrobin | |

| option httpchk GET /check | |

| http-check expect status 200 | |

| server server1 192.168.50.3:80 check |

Or, require that the response body contain a certain case-sensitive string of text:

| http-check expect string success |

You can also have one server delegate its own status to another server by adding a track parameter to a server line. It should be set to the name of a backend and server. If the tracked server is down, the server tracking it will also be down.

Passive health checks

Active checks are easy to configure and provide a simple monitoring strategy. They work well in a lot of cases, but you may also wish to monitor real traffic for errors, which is known as passive health checking. In HAProxy, a passive health check is configured by adding an observe parameter to a server line. You can monitor at the TCP or HTTP layer. In the following example, the observe parameter is set to layer4 to watch real-time TCP connections.

| backend apiservers | |

| balance roundrobin | |

| option redispatch | |

| server server1 192.168.50.3:80 check inter 2m downinter 2m observe layer4 error-limit 10 on-error mark-down |

Here, we’re observing all TCP connections for problems. We’ve set error-limit to 10, which means that ten connections must fail before the on-error parameter is invoked and marks the server as down. When that happens, you’ll see a message like this in the HAProxy logs:

| Server apiservers/server1 is DOWN, reason: Health analyze, info: "Detected 10 consecutive errors, last one was: L4 unsuccessful connection". 1 active and 0 backup servers left. 0 sessions active, 0 requeued, 0 remaining in queue. |

The only way to revive the server is with the regular health checks. In this example, we’ve used inter to set the polling health check interval to two minutes. When they do run and mark the server as healthy again, you’ll see a message telling you that the server is back up:

| Server apiservers/server1 is UP, reason: Layer4 check passed, check duration: 0ms. 2 active and 0 backup servers online. 0 sessions requeued, 0 total in queue. |

It’s a good idea to include option redispatch so that if a client runs into an error connecting, they’ll instantly be redirected to another healthy server within that backend. That way, they’ll never know that there was an issue. You can also add a retries parameter to the server so that HAProxy retries it the given number of times. The delay between retries is set with timeout connect.

As an alternative to watching TCP traffic, you can monitor live HTTP requests by setting observe layer7. This will automatically remove servers if users experience HTTP errors.

| server server1 192.168.50.3:80 check inter 2m downinter 2m observe layer7 error-limit 10 on-error mark-down |

If any webpage returns a status code other than 100-499, 501 or 505, it will count towards the error limit. Be aware, however, that if enough users are getting errors on even a single, misconfigured webpage, it could cause the entire server to be removed. If that same page affects all of your servers, you may see widespread loss of service, even if the rest of the site is functioning normally. Note that the active health checks will still run and eventually bring the server back online, as long as the server is reachable.

Gauging health with an external agent

Connecting to a service’s IP and port or sending an HTTP request will give you a good idea about whether that application is functioning. One downside, though, is that it doesn’t give you a rich sense of the server’s state, such as its CPU load, free disk space, and network throughput.

With HAProxy, you can query an external agent, which is a piece of software running on the server that’s separate from the application you’re load balancing. Since the agent has full access to the remote server, it has the ability to check its vitals more closely.

External agents have an edge over other types of health checks: they can send signals back to HAProxy to force some kind of change in state. For example, they can mark the server as up or down, put it into maintenance mode, change the percentage of traffic flowing to it, or increase and decrease the maximum number of concurrent connections allowed. The agent will trigger your chosen action when some condition occurs, such as when CPU usage spikes or disk space runs low.

Consider this example:

| backend apiservers | |

| balance roundrobin | |

| server server1 192.168.50.3:80 check weight 100 agent-check agent-inter 5s agent-addr 192.168.50.3 agent-port 9999 |

The server directive’s agent-check parameter tells HAProxy to connect to an external agent. The agent-addr and agent-port parameters set the agent’s IP and port. The interval between checks is set with agent-inter. Note that this communication is not HTTP, but rather a raw TCP connection over which the agent communicates back to HAProxy by sending ASCII text over the wire.

Here are a few things that it might send back. Note that an end-of-line character (e.g. \n) is required after the message:

Text it sends back | Result |

down\n | server is put into the down state |

up\n | server is put into the up state |

maint\n | server is put into maintenance mode |

ready\n | server is taken out of maintenance mode |

50%\n | server’s |

maxconn:10\n | server’s |

The agent can be any custom process that can return a string whenever HAProxy connects to it. The following Go code creates a TCP server that listens on port 9999 and measures the current CPU idle time. If that metric falls below 10, the code sends back the string, 50%\n, setting the server’s weight in HAProxy to half of what it is currently.

| package main | |

| import ( | |

| "fmt" | |

| "time" | |

| "github.com/firstrow/tcp_server" | |

| "github.com/mackerelio/go-osstat/cpu" | |

| ) | |

| func main() { | |

| server := tcp_server.New(":9999") | |

| server.OnNewClient(func(c *tcp_server.Client) { | |

| fmt.Println("Client connected") | |

| cpuIdle, err := getIdleTime() | |

| if err != nil { | |

| fmt.Println(err) | |

| c.Close() | |

| return | |

| } | |

| if cpuIdle < 10 { | |

| // Set server weight to half | |

| c.Send("50%\n") | |

| } else { | |

| c.Send("100%\n") | |

| } | |

| c.Close() | |

| }) | |

| server.Listen() | |

| } | |

| func getIdleTime() (float64, error) { | |

| before, err := cpu.Get() | |

| if err != nil { | |

| return 0, err | |

| } | |

| time.Sleep(time.Duration(1) * time.Second) | |

| after, err := cpu.Get() | |

| if err != nil { | |

| return 0, err | |

| } | |

| total := float64(after.Total - before.Total) | |

| cpuIdle := float64(after.Idle-before.Idle) / total * 100 | |

| return cpuIdle, nil | |

| } |

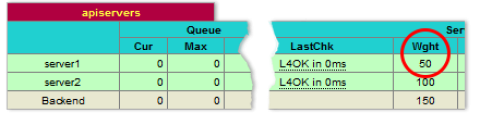

You can then artificially spike the CPU with a tool like stress. Use the HAProxy Stats page to see the effect on the load balancer. Here, the server’s weight began at 100, but is set to 50 when there is high CPU usage.

Look for the Weight column to assess the effects of your stress test

You can enable the Stats page by adding a listen section with a stats enable directive to your HAProxy configuration file. This will start the HAProxy Stats page on port 8404:

| listen stats | |

| bind *:8404 | |

| stats enable | |

| stats uri / | |

| stats refresh 5s |

Queue length as a health indicator

When you’re thinking about how to keep tabs on your servers’ health, you might wonder, what constitutes healthy anyway? Certainly, loss of connectivity and returning errors fall into the unhealthy category. Those are failed states. However, before it gets to that point, maybe there are warning signs that the server is on a downward spiral, moving towards failure.

One early warning sign is queue length. Queue length is the number of sessions in HAProxy that are waiting for the next available connection slot to a server. Whereas other load balancers may simply fail when there are no servers ready to receive the connection, HAProxy queues clients until a server becomes available.

Following best practices, you should have a maxconn specified on each server line. Without one, there’s no limit to the number of connections HAProxy can open. While that might seem like a good thing, servers have finite capacity. The maxconn setting caps it so that the server isn’t overloaded with work. The following configuration limits the number of concurrent connections to 30:

| backend apiservers | |

| balance roundrobin | |

| server server1 192.168.50.3:80 check maxconn 30 |

Under heavy load, or if the server is sluggish, the connections may exceed maxconn and will then be queued in HAProxy. If the queue length grows past a certain number or if sessions in the queue have a long wait time, it’s a sign that the server isn’t able to keep up with demand. Maybe it’s processing a slow database query or perhaps the server isn’t powerful enough to handle the volume of requests.

When queue length grows, clients begin to experience increasingly long wait times. With a bottleneck, downstream client applications may exhaust their worker threads, run into their own timeouts, or fail in unexpected ways. You can see why monitoring queue length is a good way to gauge the health of the system. So, what can you do when you see this happening?

Applying backpressure with a timeout

By default, each backend queue in HAProxy is unbounded and there’s no timeout on it whatsoever. However, it’s easy to add a limit with the timeout queue directive. This ensures that clients don’t stay queued for too long before receiving a 503 Service Unavailable error.

| backend apiservers | |

| balance leastconn | |

| timeout queue 5s | |

| server server1 192.168.50.3:80 check maxconn 30 |

You might think that sending back an error is bad news. If we didn’t queue at all, then I’d say that was true. However, under unusual circumstances, failure can be better than leaving clients waiting for a long time. Client applications can build logic around this. For example, they could retry after a few seconds, display a message to the user, or even scale out server resources if given access to the right APIs. Giving feedback to downstream components about the health of a server is known as backpressure. It’s a mechanism for letting the client react to early warning signs, such as by backing off.

If you do not set timeout queue, it defaults to the value of timeout connect. It can also be set in a defaults section.

Failing over to a remote load balancer

Another way to deal with a growing queue is to direct clients to another load balancer. Consider a scenario where you have geographically redundant data centers: one in North America and another in Europe. Ordinarily, you’d send clients to the data center nearest to them. However, if a backend has an avg_queue, which is the queue length of all servers within that backend divided by the number of servers, that grows past a certain point, you could send clients to the other data center’s load balancer.

Your North American load balancer would be configured like this:

| frontend api_gateway_northamerica | |

| bind :80 | |

| acl northamerica_toobusy avg_queue(northamerica) gt 20 | |

| acl europe_up srv_is_up(europe/server1) | |

| use_backend europe if northamerica_toobusy europe_up | |

| default_backend northamerica | |

| backend northamerica | |

| balance leastconn | |

| timeout queue 5s | |

| server server1 server1:80 check maxconn 30 | |

| backend europe | |

| option httpchk GET /check | |

| server server1 eu-api.mysite.com:80 check port 8080 |

In the frontend section, there are two ACLs. The first, northamerica_toobusy, checks if the average queue length of the northamerica backend is greater than 20. The second, europe_up, uses the srv_is_up fetch method to check whether the server in the europe backend is up.

The interesting thing about this is that the europe_up ACL is checking a remote address that’s in the European data center by pointing to a backend that contains the address of the European data center’s load balancer. This means that the North American load balancer is not checking the health of the European servers directly. Instead, it’s monitoring a URL that the European load balancer has provided. That’s because the North American load balancer doesn’t have any information about connections that it didn’t proxy and wouldn’t have the full picture of the state of those servers. Essentially, you’re avoiding the problem where each load balancer sends clients directly to the origin servers but without adequate knowledge of all of the connections in play, potentially swamping those servers with connections from multiple load balancers.

When the northamerica backend has too many sessions queued and the europe backend is up, clients get redirected to the Europe URL. It’s a way to offload work until things calm down. The European load balancer would be configured like this:

| frontend api_gateway_europe | |

| bind :80 | |

| default_backend europe | |

| frontend health_check | |

| bind :8080 | |

| monitor-uri /check | |

| acl europe_toobusy avg_queue(europe) gt 20 | |

| monitor fail if europe_toobusy | |

| backend europe | |

| balance leastconn | |

| timeout queue 5s | |

| server server1 server1:80 check maxconn 30 |

Here, you’re using the monitor-uri directive to intercept requests for /check on port 8080 and having HAProxy automatically send back a 200 OK response. However, if the europe backend becomes too busy, the monitor fail directive becomes true and begins sending back 503 Service Unavailable. In this way, the European data center can be monitored from the North American data center and requests will only be redirected there if it’s healthy.

Real-time Dashboard

HAProxy Enterprise combines the stable codebase of HAProxy with an advanced suite of add-ons, expert support and professional services. One of these add-ons is the Real-time Dashboard, which allows you to monitor the health of a cluster of load balancers.

The Real-time Dashboard is one of the advanced add-ons you get with HAProxy Enterprise

Out-of-the-box, HAProxy provides the Stats page, which gives you observability over a single load balancer instance. The Real-time Dashboard aggregate information across multiple load balancers for easier and faster administration. You can enable, disable, and drain connection from any of your servers and the list can be filtered by server name or by server status.

Conclusion

In the blog post, you learned various ways to health check your servers so that your APIs maintain a high level of reliability. This includes enabling active or passive health checks, communicating with an external agent, and using queue length as an early warning signal for failure.

HAProxy Enterprise offers expert support services and a variety of advanced security modules that are necessary in today’s threat landscape. Want to learn more? Sign up for a free trial or contact us. You can stay in the loop about topics like these by subscribing to this blog or following us on Twitter. You can also join the conversation on Slack.

Subscribe to our blog. Get the latest release updates, tutorials, and deep-dives from HAProxy experts.

![Using HAProxy as an API Gateway, Part 2 [Authentication]](https://cdn.haproxy.com/img/containers/partner_integrations/image2-e1548174430466.png/3942e9fa498bb46e325ba72db6f9bd84/image2-e1548174430466.png)

![Using HAProxy as an API gateway, part 1 [introduction]](https://cdn.haproxy.com/img/containers/partner_integrations/2022/api-gateway-pt1/avalanche-area-1080x540.png/2b915c11c726470bf6e314e194c3a7ed/avalanche-area-1080x540.png)

![Using HAProxy as an API Gateway, Part 5 [Monetization]](https://cdn.haproxy.com/img/containers/partner_integrations/api-gateway-monetization.png/f6ba7bc74399bb3dbeaec96f2813c2f7/api-gateway-monetization.png)

![Using HAProxy as an API Gateway, Part 6 [Security]](https://cdn.haproxy.com/img/containers/partner_integrations/api-gateway-security.png/809caaf409b9cbba5f53d22b31c00307/api-gateway-security.png)