Criteo’s Service Mesh with Consul & HAProxy Enterprise

Millions of requests per second at any point in time.

29 offices around the world with more than 2,800 employees.

Founded in 2013 and grew to be one of the largest ad agencies.

About Criteo

Criteo began within a start-up incubator in Paris and has grown to be a global leader in commerce marketing, with 29 offices around the world. It uses machine learning, big data, and the creativity of its 2,800 employees to serve billions of advertisements per year. Its services help companies grow brand awareness, develop targeted audiences, and get noticed.

Results at a Glance

The Challenge

When it comes to technology, Criteo is typically the first to arrive, not the last. Its engineers and architects use this savvy to select best-of-breed software, implement infrastructure automation, adopt container platforms, and, in general, host systems that are self-service and easy to use. The practical reasons driving these initiatives are to provide infrastructure that supports Criteo’s traffic (millions of requests per second) and to give the R&D department the ability to deploy quickly and try out new ideas, with as little friction as possible.

The software engineers had achieved vast scalability by designing their architecture using microservices. This approach allowed them to optimize at a granular level by increasing the number of nodes for any service, independent of the others. However, they wrestled with the problem of connecting their frontend web applications with their backend microservices. Initially, they used custom libraries to proxy messages between the layers, but this became difficult to maintain.

Criteo’s infrastructure in 2013, when the company started picking up steam as one of the world’s largest advertisers

Next, they introduced a layer of HAProxy Enterprise load balancers between the applications and backend services, but this added extra latency. Criteo’s advertising platform depends on speed, and every millisecond counts. So, they needed a novel approach that would keep latency to a minimum, and they began looking for a more seamless way to connect their applications and services.

The Objectives

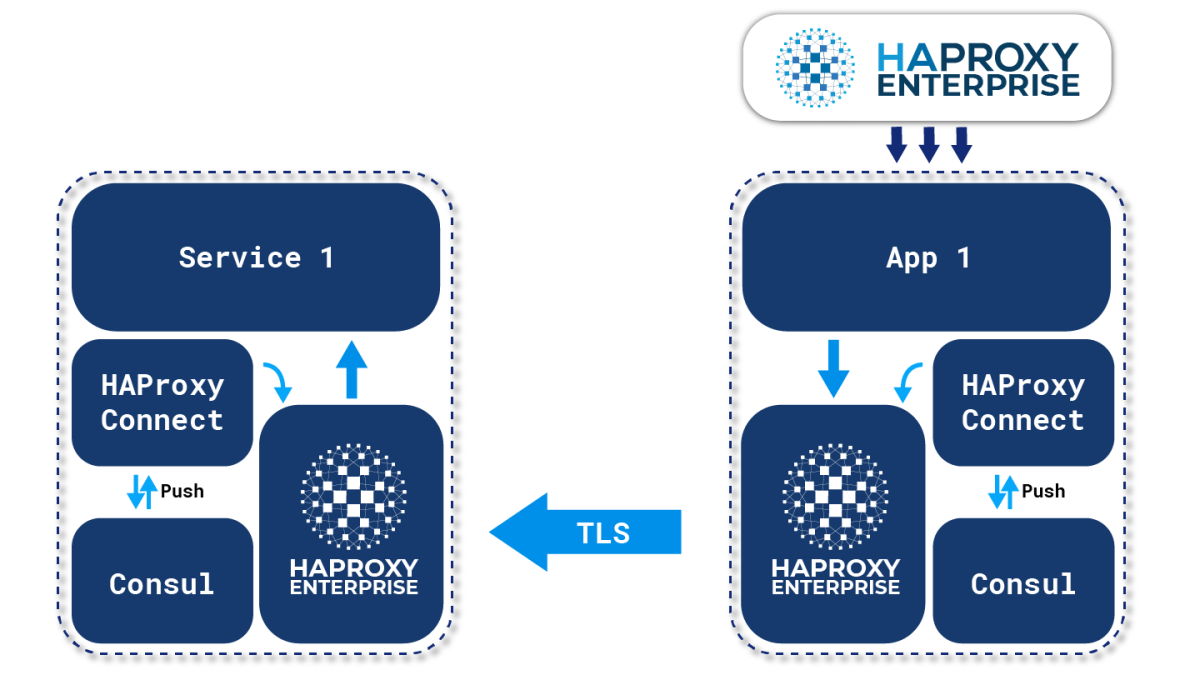

The Criteo engineers decided that the best way to solve the problem was to implement a service mesh. A service mesh places a proxy next to every microservice, which in Criteo’s case would replace their existing client-side libraries. Then, instead of routing traffic to an extra layer of load balancers, which would add a network hop, an application could speak to a microservice directly, relaying its messages through its local, sidecar proxy.

Criteo’s current solution consists of one microservice contacting another by leveraging HAProxy Enterprise’s Stream Processing Offload Protocol (SPOP)

They would use HashiCorp Consul to manage the configuration for all of those proxies. Consul would hold the routing rules and transmit them to each node. Whenever a new microservice came online, Consul would notify the entire fleet and all proxies would sync with the new information. The problem was that the default proxy that Consul provided, Envoy Proxy, was insufficient for their requirements.
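As a rough illustration of how routing data from Consul can end up in a proxy, the sketch below turns a list of service instances into an HAProxy backend section. It is not Criteo's code. The sample data is hypothetical and only mimics the shape of a response from Consul's `/v1/health/service/<name>` endpoint, and `render_backend` and the service name `bidder` are names invented for this example.

```python
import json

# Hypothetical sample, trimmed to the fields used below. It imitates the
# shape of a Consul /v1/health/service/<name> response.
SAMPLE_RESPONSE = json.loads("""
[
  {"Node": {"Node": "node-a", "Address": "10.0.0.11"},
   "Service": {"ID": "bidder-1", "Service": "bidder",
               "Address": "10.0.0.11", "Port": 8080}},
  {"Node": {"Node": "node-b", "Address": "10.0.0.12"},
   "Service": {"ID": "bidder-2", "Service": "bidder",
               "Address": "", "Port": 8080}}
]
""")


def render_backend(service_name, entries):
    """Render an HAProxy backend section with one server line per instance."""
    lines = [f"backend {service_name}", "    balance roundrobin"]
    for entry in entries:
        service = entry["Service"]
        # Consul leaves the service address empty when the instance should be
        # reached at its node's address, so fall back to the node address.
        address = service["Address"] or entry["Node"]["Address"]
        lines.append(f"    server {service['ID']} {address}:{service['Port']} check")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_backend("bidder", SAMPLE_RESPONSE))
```

In a real deployment the instance list would be refreshed whenever Consul reports a change, and the rendered section would be pushed to the local proxy rather than printed.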

The Solution

They had already replaced their F5 load balancer with HAProxy Enterprise, since it could handle millions of requests per second, provided excellent logs and metrics, and supported TLS offloading, rate limiting, and other features. They determined that it would fit the role of sidecar proxy perfectly—but it was not available as an option with Consul. So, they decided to build the integration between Consul and HAProxy Enterprise themselves.

Criteo engineers chose HAProxy Enterprise because it builds upon the open-source HAProxy with additional modules and provides expert technical support. The team then wrote custom code that gathers routing information from Consul and applies it to HAProxy Enterprise. HAProxy Enterprise’s flexible configuration language made this possible and gave them access to all of the features they needed, and the HAProxy Data Plane API made it even easier. The Data Plane API allows you to configure HAProxy Enterprise using HTTP commands, so it can be set up programmatically.
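To give a sense of what configuring the proxy over HTTP can look like, here is a minimal sketch that builds (but does not send) a request to add a server to a backend. The endpoint path follows the version 2 Data Plane API as commonly documented, so check it against the version you run. The base URL, credentials, backend name, and the helper `build_add_server_request` are all assumptions made for this example, not details from Criteo's integration.

```python
import base64
import json
import urllib.parse
import urllib.request


def build_add_server_request(base_url, backend, server, config_version, user, password):
    """Build a POST request that would add `server` to `backend`.

    Assumes the v2 Data Plane API layout, where a change to the configuration
    carries the current configuration version as a query parameter.
    """
    query = urllib.parse.urlencode({"backend": backend, "version": config_version})
    url = f"{base_url}/v2/services/haproxy/configuration/servers?{query}"
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        data=json.dumps(server).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


if __name__ == "__main__":
    # Hypothetical address and credentials for a local Data Plane API.
    request = build_add_server_request(
        "http://127.0.0.1:5555",
        backend="bidder",
        server={"name": "bidder-1", "address": "10.0.0.11", "port": 8080},
        config_version=3,
        user="admin",
        password="example-password",
    )
    print(request.get_method(), request.full_url)
    # Sending it would be: urllib.request.urlopen(request)
```

Building the request separately from sending it keeps the sketch runnable without a live proxy and makes the generated URL and payload easy to inspect.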

Pierre during his HAProxyConf 2019 talk

A further challenge was access control: when one microservice contacts another, the load balancers between them need to decide whether to allow the communication. For security reasons, some microservices should not be able to connect to others. Criteo leveraged HAProxy Enterprise’s unique Stream Processing Offload Protocol (SPOP) to run a program beside HAProxy Enterprise that performs this check. SPOP allows live traffic to be streamed to an external program, where it can be inspected and processed in real time as it flows through the load balancer. The team developed a module that validates the calling service’s credentials before allowing the request to pass.
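The decision such a check makes can be pictured with a small sketch. The code below covers only the allow/deny logic, not the SPOP wire protocol or credential validation, and it is not Criteo's module. The rule table, the `*` wildcard convention, the service names, and the function `is_call_allowed` are hypothetical.

```python
# Hypothetical rules: (calling service, target service) -> "allow" or "deny".
# "*" stands for any service.
RULES = {
    ("web-frontend", "bidder"): "allow",
    ("bidder", "user-profile"): "allow",
    ("*", "billing"): "deny",
    ("reporting", "billing"): "allow",
}


def is_call_allowed(source, destination, rules):
    """Return True if `source` may call `destination`.

    The most specific rule wins: an exact pair first, then a rule covering any
    caller of the destination, then a rule covering any call made by the
    source. With no matching rule, the call is denied.
    """
    for candidate in ((source, destination), ("*", destination), (source, "*")):
        if candidate in rules:
            return rules[candidate] == "allow"
    return False


if __name__ == "__main__":
    for caller, target in [("web-frontend", "bidder"), ("web-frontend", "billing")]:
        verdict = "allowed" if is_call_allowed(caller, target, RULES) else "denied"
        print(f"{caller} -> {target}: {verdict}")
```

Denying by default means a newly deployed service cannot reach anything until a rule explicitly permits it, which matches the goal described above that some microservices should not be able to connect to others.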

When the project was completed, HAProxy Enterprise became the central component of Criteo’s Consul-powered service mesh, routing traffic for roughly 270,000 service nodes. Using the same proxy everywhere gave them the features they needed, including TLS encryption between services and rate limiting, while keeping latency extremely low. Criteo has since open-sourced its implementation and conferred stewardship of it to HAProxy Technologies.

The Results

Criteo gained better performance and security between their microservices by developing a service mesh that uses HAProxy Enterprise as the client-side load balancer. All traffic flowing through their network inherits TLS encryption, fast performance, and other HAProxy Enterprise features such as rate limiting and detailed observability. Today at Criteo, millions of requests per second flow through HAProxy Enterprise, allowing them to continue their journey towards easy, self-service infrastructure.
