Log sampling, a feature introduced in HAProxy 2.0, lets you keep only a defined proportion of your logs. The sample still gives you a representative view of your data while reducing what you spend on shipping and storing it.

Log files are the key to observability. They provide information for debugging and for analytics that show how users interact with an application. Every login, every transaction, every search, and every click can be logged, and together these logs form an intimate view of how a user navigates through your system.

At scale, though, comprehensive logs can become unmanageable quickly. Large amounts of log data are expensive to store and ship. For example, if you are serving 500,000 requests per second, you will find yourself having to deal with over five terabytes of logs each and every day:

At 500,000 requests per second with about 125 bytes logged per request, you will generate 62.5 MB of logs every second. With 86,400 seconds in a day, that works out to 5.4 terabytes per day, which is over 1.9 petabytes per year.
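The arithmetic can be checked with a few lines of Python. This is only a back-of-the-envelope sketch: the constant names are illustrative, the 125-byte line size is the rough average quoted above, and decimal units (1 TB = 10^12 bytes) are assumed.

```python
# Back-of-the-envelope log volume, using the figures from the text.
REQUESTS_PER_SECOND = 500_000
BYTES_PER_LOG_LINE = 125      # rough average assumed in the text
SECONDS_PER_DAY = 86_400

bytes_per_second = REQUESTS_PER_SECOND * BYTES_PER_LOG_LINE
bytes_per_day = bytes_per_second * SECONDS_PER_DAY
bytes_per_year = bytes_per_day * 365

# Decimal units: 1 MB = 1e6 bytes, 1 TB = 1e12 bytes, 1 PB = 1e15 bytes.
print(f"{bytes_per_second / 1e6:.1f} MB per second")
print(f"{bytes_per_day / 1e12:.1f} TB per day")
print(f"{bytes_per_year / 1e15:.2f} PB per year")
```

Changing either constant to match your own traffic gives a quick estimate of what unsampled logging would cost you.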

That’s a lot of data.

HAProxy’s log sample feature lets you define what proportion of your logs you want to keep and process, using a directive introduced in version 2.0. Taking this sample locally in your load balancer, as opposed to taking the sample after it’s sent to a remote syslog server, will minimize the amount of data that you ship and process.

The cost-saving benefits of sampling

Do you need all of your log data to understand your application?

Perhaps not.

We’ll borrow some of the methods that statisticians use to cut storage, save money, and reduce processing time without sacrificing the insight we can derive from our log data. How?

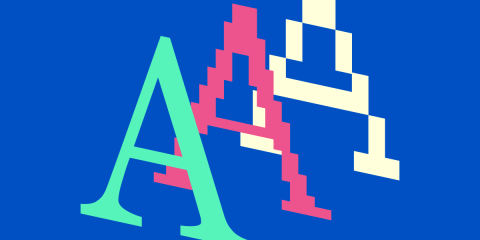

Consider the image below.

Mona Lisa downsampled

In it, I’ve cropped a photo of Leonardo da Vinci’s Mona Lisa from Wikipedia. In the second frame, I’ve used an algorithm to discard about half of the data. It’s still recognizable, of course, but less aesthetically pleasing.

Each successive frame downsamples it further (1:1, 1:2, 1:10, 1:25) until, in the last frame, at 1/25 resolution, the resulting image is barely recognizable as the original painting.

Let’s look at another example.

Sine wave at different resolutions

If you’re familiar with digital audio, you probably know that a higher sample rate in a sound file translates to better sound. CD audio is sampled at 44.1 kHz (44,100 samples per second) while high-resolution audio might be sampled as high as 192 kHz. At the other end of the spectrum, sampling at 8 kHz will give you a much lower quality audio file, not nearly as pleasing to listen to, but one that might be suitable for applications that don’t require high fidelity, where the content of the message that’s recorded is more important than how it sounds.

The bandwidth and storage requirements for the 8 kHz file are, of course, considerably reduced.

Log files are much the same. To get a complete picture of your application’s traffic, you’ll need to capture every log line. To get a view that is less than perfect but still represents your traffic accurately, take a sample of your logs.

How well does a 50% sample of your logs represent your traffic, compared to 100%? Probably pretty well. Likewise, you may find that when you plot a graph of one of the fields captured by HAProxy, 25% of your logs follow the same curve as your 100% curve, but at 10%, you lose the ability to discern valuable insight about your application.
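One way to build intuition before touching production traffic is to run the comparison on synthetic data. The sketch below is not HAProxy code: it generates made-up response times (the log-normal shape and its parameters are assumptions), keeps the first of every N values as a simple model of a 1:N sample, and compares the mean and 95th percentile against the full data set. The helper names `keep_one_in` and `p95` are hypothetical.

```python
import random
import statistics

def keep_one_in(values, n):
    """Keep the first item of every block of n items (a model of a 1:n sample)."""
    return values[::n]

def p95(values):
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = max(1, -(-95 * len(ordered) // 100))  # ceil(0.95 * len)
    return ordered[rank - 1]

random.seed(42)  # fixed seed so runs are repeatable
# Synthetic response times in milliseconds; the log-normal shape is an assumption.
response_ms = [random.lognormvariate(4.0, 0.6) for _ in range(100_000)]

baseline_mean = statistics.fmean(response_ms)
baseline_p95 = p95(response_ms)

for n in (1, 2, 4, 10, 25):
    sample = keep_one_in(response_ms, n)
    print(
        f"1:{n:<2} kept={len(sample):>6} "
        f"mean={statistics.fmean(sample):7.2f} (full {baseline_mean:.2f}) "
        f"p95={p95(sample):7.2f} (full {baseline_p95:.2f})"
    )
```

Real traffic is rarely this well behaved, so treat the output as an illustration of the method rather than a prediction. The same comparison run against a field from your own logs is what tells you where the curves start to diverge.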

Look again at each of the frames in our Mona Lisa image. In the last frame, at 1:25 sampling (4% of the original data), you can recognize that it represents a person, probably that it’s the Mona Lisa, but her enigmatic smile is gone. At 10%, though, it’s still there. Somewhere between 1:10 and 1:25 is the lower bound for this very nuanced and subjective measurement.

Log sampling in HAProxy

Let’s take a look at an example log directive in HAProxy that uses log sampling to capture one log line out of every ten:

    global
        log 127.0.0.1:514 sample 1:10 local0 info

In this example, 127.0.0.1:514 is the syslog server on localhost (514 being the standard syslog port) and sample 1:10 is our sample frequency, meaning that one out of every ten log lines is forwarded to syslog. The local0 field is the log facility and info is the severity level.

Choosing a sample frequency

There is no magic formula for determining the ideal sample frequency. To explore which sampling frequency will work for you, you will need to first establish a baseline, most likely by taking a look at un-sampled log data, that is to say, 100% of your logs.

    log 127.0.0.1:514 sample 1:1 local0 info

Next, try sampling at 50%:

    log 127.0.0.1:514 sample 1:2 local0 info

If you like, you can use different syslog servers and try both concurrently:

    # Local Syslog:
    log 127.0.0.1:514 sample 1:1 local0 info
    # Another Syslog:
    log 192.168.1.100:514 sample 1:2 local0 info

You’re not limited to a simple ratio such as 1:10, either. The following is valid:

    log 127.0.0.1:514 sample 2-3,8-11,46,67-83:100 local0 info

Out of every 100 requests, this would capture logs for requests 2 and 3, then 8 through 11, then 46, and finally 67 through 83. This is useful if you are interested in exploring dependencies and progression between consecutive events.
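To see exactly which positions a range expression selects, and what fraction of traffic that amounts to, you can model it in a few lines of Python. This is a sketch of the semantics as described here, not HAProxy's own parser: `parse_sample_spec` and `is_logged` are hypothetical helpers, and HAProxy's actual validation rules may be stricter or looser than the check shown.

```python
def parse_sample_spec(spec):
    """Parse '<ranges>:<size>' into (kept positions, window size); positions are 1-based."""
    ranges_part, size_part = spec.rsplit(":", 1)
    size = int(size_part)
    kept = set()
    for chunk in ranges_part.split(","):
        start, _, end = chunk.partition("-")
        first = int(start)
        last = int(end) if end else first  # a bare number is a one-item range
        if not 1 <= first <= last <= size:
            raise ValueError(f"range {chunk!r} does not fit in a window of {size}")
        kept.update(range(first, last + 1))
    return kept, size

def is_logged(request_number, kept, size):
    """True if the request (counted from 1) falls on a kept position in its window."""
    position = (request_number - 1) % size + 1
    return position in kept

kept, size = parse_sample_spec("2-3,8-11,46,67-83:100")
print(sorted(kept))
print(f"{len(kept)} of every {size} requests logged ({len(kept) / size:.0%})")
```

The same helper handles the simple ratios used earlier: `parse_sample_spec("1:10")` keeps position 1 in each window of 10.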

Can you still answer crucial questions if you have only 50% of your data? 25%? 10%? How low can you go and still get the same answers? It doesn’t need to be a “set it and forget it” proposition. The ideal sample frequency can change if the nature of your traffic changes substantially. For example, if your app experiences anomalous traffic at certain times or during different campaigns, you may need to adjust the sampling frequencies you employ. A sample frequency that worked well last Friday may not work well on Black Friday.

The key may lie in how diverse the traffic is that you load balance: The more heterogeneous a data population is, the larger the sample needs to be. Your own results will need to be tuned and checked and re-tuned in different situations, but fortunately, HAProxy makes doing this a simple process.
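The link between heterogeneity and sample size can be made concrete with the textbook formula for estimating a mean, n = (z·σ / E)², where σ is the standard deviation, E is the margin of error you can tolerate, and z is about 1.96 for 95% confidence. The sketch below assumes a simple random sample from a large population; HAProxy's position-based sampling is not random, so treat the result as a rough guide only. The function name and the figures are hypothetical.

```python
import math

def required_sample_size(std_dev, margin, z=1.96):
    """Lines needed to estimate a mean within +/- margin (z=1.96 is about 95% confidence)."""
    if std_dev < 0 or margin <= 0:
        raise ValueError("std_dev must be non-negative and margin must be positive")
    return math.ceil((z * std_dev / margin) ** 2)

# Hypothetical figures: same target precision, different spread in response times.
for std_dev_ms in (50, 100, 200):
    n = required_sample_size(std_dev_ms, margin=5)
    print(f"std dev {std_dev_ms:>3} ms -> about {n} log lines")
```

Because the standard deviation is squared, doubling the spread of your data roughly quadruples the number of log lines needed for the same precision.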

It will prove helpful to have a visualization tool such as Grafana or Elastic Stack to help you see how each successive change affects the graphs produced.

The lost log problem

Some of you may wonder whether log sampling will store the right logs. If you need to troubleshoot a request, will the log be there? The approach you should take is to always capture requests that resulted in an error. Remember, you can use syslog severity levels to control how much data you log. Logs with a severity of error and above could be sent to one syslog address, while sampled logs, which are meant for seeing trends in your data (the forest, not the trees), could be sent to another. Or, you can send them to the same address, but use different facility codes.

    # Sampled logs:
    log 192.168.1.100:514 sample 1:10 local0 info
    # Error logs:
    log 192.168.1.101:514 local0 err

You can even promote interesting requests to error status to make sure you capture them:

    frontend www
        bind :80
        acl failed_request status 400 401 403 404 405 408 429 500 503
        http-response set-log-level err if failed_request

The important distinction is that you often don’t need 100% of logs to see trends, but you should capture logs for requests that you’ll likely want to inspect.

Conclusion

Log sampling in HAProxy is a straightforward yet sophisticated tool to help you maximize your log analysis capabilities while helping to minimize your expenditures. If you leverage this feature well, you will have the most economical but still accurate view of your systems.
