
Register for our on-demand webinar “HAProxy 2.3 Feature Roundup” with Daniel Corbett, Director of Product and Marketing at HAProxy Technologies. Baptiste Assmann also hosted this webinar in French, and that session is available to watch on demand as well.

HAProxy 2.3 adds exciting features such as the ability to forward, prioritize, and translate messages sent over the Syslog Protocol on both UDP and TCP, an OpenTracing SPOA, Stats Contexts, SSL/TLS enhancements, an improved cache, and changes in the connection layer that lay the foundation for HTTP/3 and QUIC support.

This release was truly a community effort and would not have been possible without the hard work of everyone involved in active discussions on the mailing list and the HAProxy project GitHub.

The HAProxy community provides code submissions covering new functionality and bug fixes, documentation improvements, quality assurance testing, continuous integration environments, bug reports, and much more. Everyone has done their part to make this release possible! If you’d like to join this amazing community, you can find it on GitHub, Slack, Discourse, and the HAProxy mailing list.

In this post, we’ll give you an overview of the following updates included in this release:

Connection Improvements

Syslog Protocol (UDP/TCP)

Load Balancing

Cache

SSL/TLS Enhancements

Observability

OpenTracing (SPOE)

HTTP Request Actions

New Sample Fetches & Converters

Lua

Build

Testing

Deprecated and Removed Directives

Miscellaneous

Contributors

Connection Improvements

Contributors have been preparing HAProxy for QUIC and HTTP/3. There’s been quite a bit of low-level work. The connection layer was optimized to reduce the number of syscalls, and several debugging entries were added to help us better spot anomalies. Listeners have been reworked, and related structures have been reorganized to better suit the new design. File descriptors are no longer manipulated by the listener layer, and everything needed to register a QUIC protocol layer based on UDP should be in place.

Apart from changes targeting HTTP/3, HAProxy now gives you more control over the Linux TCP keepalive feature. TCP keepalive lets the Linux kernel know when a peer on the other end of a connection has stopped responding and that it’s safe to close the idle connection. It discovers this by sending probes. If the peer doesn’t reply, the socket is closed automatically.

In past versions of HAProxy, you could enable TCP keepalive but could not change the number of probes to send, the interval between them, or how long to wait before sending the first one. In some use cases, the default TCP keepalive settings are too long, and adjusting them system-wide through the sysctl parameters tcp_keepalive_time, tcp_keepalive_intvl, and tcp_keepalive_probes affects every process on the server, which may be undesirable. This release lets you set these parameters on both the client side and the server side through the following options:

| Option | Description |
|---|---|
| | Sets the maximum number of keepalive probes TCP should send before dropping the connection on the client side. |
| | Sets the time the connection needs to remain idle before TCP starts sending keepalive probes on the client side, if enabled. |
| | Sets the time between individual keepalive probes on the client side. |
| | Sets the maximum number of keepalive probes TCP should send before dropping the connection on the server side. |
| | Sets the time the connection needs to remain idle before TCP starts sending keepalive probes on the server side, if enabled. |
| | Sets the time between individual keepalive probes on the server side. |
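As a minimal sketch, the configuration below enables keepalive on both sides of the proxy and tunes the probes. It assumes the 2.3 keyword names clitcpka-idle, clitcpka-intvl, and clitcpka-cnt (client side, in a frontend) and their srvtcpka-* counterparts (server side, in a backend); the section names, port, and server address are placeholders.

```haproxy
# Sketch only: fe_main, be_app, app1 and 192.0.2.10 are placeholders
defaults
    mode tcp
    timeout connect 5s
    timeout client  1h       # long idle timeouts, so keepalive has time to act
    timeout server  1h

frontend fe_main
    bind :5000
    option clitcpka          # enable TCP keepalive on client-side sockets
    clitcpka-idle  60s       # idle time before the first probe
    clitcpka-intvl 10s       # delay between probes
    clitcpka-cnt   3         # unanswered probes before the connection is dropped
    default_backend be_app

backend be_app
    option srvtcpka          # enable TCP keepalive on server-side sockets
    srvtcpka-idle  60s
    srvtcpka-intvl 10s
    srvtcpka-cnt   3
    server app1 192.0.2.10:8080
```

With these values, a silent peer is detected after roughly idle + cnt × intvl, about 90 seconds, instead of relying on the Linux default tcp_keepalive_time of 7200 seconds, and without touching the sysctl settings shared by every process on the host.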

Syslog Protocol (UDP/TCP)

Historically, HAProxy has had the capability to load balance syslog servers using mode tcp. It’s able to do this with just about any protocol that’s transported over TCP, but because the proxying happens at layer 4, once the connection is established, you lose inspection of the stream.

HAProxy 2.3 allows you to create a syslog listener over UDP or TCP that can forward, prioritize, and translate syslog messages to a pool of UDP or TCP syslog servers. It does this by introducing a new section called log-forward that can bind on TCP using the bind keyword and on UDP using dgram-bind for both IPv4 and IPv6. When combined with the log sampling feature added in HAProxy 2.0, you get granular control over how your syslog messages are forwarded. In fact, the log sampling feature achieves a form of round-robin load balancing between backend syslog servers. Just know that HAProxy does not support health checking of these servers yet, and cannot failover if a server becomes unavailable. In the following example, HAProxy samples the incoming log messages and load balances them across the four UDP syslog servers.

```haproxy
log-forward syslog-lb
    bind :::7514        # Listen on TCP IPv4/IPv6
    dgram-bind :::7514  # Listen on UDP IPv4/IPv6
    # load balance messages on 4 udp syslog servers
    log 10.1.0.2:10001 format rfc5424 sample 1:4 local0 info
    log 10.1.0.3:10002 format rfc5424 sample 2:4 local0 info
    log 10.1.0.4:10003 format rfc5424 sample 3:4 local0 info
    log 10.1.0.5:10004 format rfc5424 sample 4:4 local0 info
```

You can now also translate messages from one format to another. In the above example, all syslog messages received will be translated to the RFC 5424 format, regardless of the syslog format in which they were received.

The Runtime API show info command also exposes a new counter called CumRecvLogs, which provides a global count of received syslog messages.

Load Balancing

The balance directive sets the load balancing algorithm HAProxy will use to balance traffic across servers. Common values include roundrobin, leastconn, and hash-based algorithms like source and uri. When you set it as balance uri, HAProxy selects a server based on a hash of the URI path, allowing all future requests for the same path to go to the same server, which is advantageous when load balancing caches since it increases your cache hit ratio.

Some users reported inconsistencies in balance uri between requests received over HTTP/1 and the same requests received over HTTP/2. HTTP/2 transports URIs differently than its predecessor: whereas HTTP/1 puts the domain information in the Host header and keeps the URI path in the request line, HTTP/2 carries everything, including the path, in pseudo-headers. HAProxy reassembles the information, but a one-to-one comparison has its challenges, and one of them turned out to be producing uniform hashes of URIs when incoming requests use a mix of these protocols. A new option for balance uri, path-only, tells HAProxy to calculate the hash using only the path, which normalizes HTTP/1 and HTTP/2 requests.
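A minimal sketch of a cache-tier backend using the new option; the backend name, server names, and addresses are placeholders, and hash-type consistent is an optional, commonly paired choice rather than something path-only requires:

```haproxy
# Sketch: hash on the path only, so /img/logo.png maps to the same cache
# server whether the request arrived over HTTP/1.1 or HTTP/2
backend be_caches
    mode http
    balance uri path-only
    hash-type consistent     # limit remapping when a cache server goes away
    server cache1 192.0.2.21:80 check
    server cache2 192.0.2.22:80 check
    server cache3 192.0.2.23:80 check
```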

The balance random algorithm has also been improved. If it picks a server whose maxconn value has been reached, meaning that connections are queuing up for that server, it now adds the request to the backend's queue rather than the server's queue. The request can then be dispatched to another available server, typically the fastest one.
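The queueing behavior described above only comes into play when servers have a maxconn limit. A minimal sketch, with placeholder names, addresses, and limits:

```haproxy
backend be_app
    mode http
    balance random
    timeout queue 5s         # how long a request may wait in a queue
    # once a server reaches its maxconn, new requests wait in the backend
    # queue and are served by whichever server frees a slot first
    server app1 192.0.2.10:8080 maxconn 50 check
    server app2 192.0.2.11:8080 maxconn 50 check
```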

It was also found that, for some load-balancing algorithms (roundrobin, static-rr, leastconn, first), a request ends up in the backend's queue only after a previous attempt to find a suitable server has already tried all of them. The engineers optimized this: when that condition is met, the next request skips trying each server and goes directly to the backend's queue. In a test environment, this change increased the request rate from 110k to 148k RPS for 200 saturated servers on 8 threads.

The leastconn algorithm now takes the queue length into account when dispatching requests, so a server with many queued requests won't be hammered with extra connections.

Cache

HAProxy introduced a small object cache in version 1.8 that allows caching of objects up to tune.bufsize. Its purpose is to offload the fetching of small files, such as favicon.ico and JavaScript or CSS files, from the application servers, although it also lends itself well to caching dynamic content for a short duration, such as news announcements, message boards, and user reviews. Version 1.9 expanded on this idea by increasing the maximum object size to 2GB, which can be defined with max-object-size, and increasing the total cache size to 4GB, which can be defined using total-max-size.

HAProxy 2.3 continues to build on this and adds support for the Expires header, which instructs HAProxy how long it should cache the response. HAProxy already supported the Cache-Control header. The cache also now supports the ETag, If-None-Match, and If-Modified-Since headers and can return an HTTP status code 304 instead of the full object. HAProxy will now also reject any configuration that has a duplicate cache section name.
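A minimal sketch of a cache that benefits from this header handling; the cache name, sizes, backend, and server address are placeholders. Our understanding is that max-age acts as an upper bound on whatever lifetime the response headers allow:

```haproxy
cache small_objects
    total-max-size 64        # total cache size, in megabytes
    max-object-size 1048576  # largest cacheable object, in bytes
    max-age 60               # upper bound, in seconds, on how long an object is kept

backend be_app
    mode http
    http-request cache-use small_objects
    http-response cache-store small_objects
    server app1 192.0.2.10:8080
```

With this in place, a client revalidating with If-None-Match or If-Modified-Since can receive a 304 from the cache instead of the full object.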

Two new fetch methods were added, res.cache_hit and res.cache_name, which tell you whether a response came from the cache and, if so, the name of the cache used. You could reference these to set HTTP response headers that indicate a cache hit status, for example.
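A minimal sketch of such headers, assuming a cache section named my_cache is defined elsewhere; the header names and server address are arbitrary placeholders. http-after-response is used because it also applies to responses HAProxy produces itself, such as cache hits:

```haproxy
backend be_app
    mode http
    http-request cache-use my_cache
    http-response cache-store my_cache
    # report the cache outcome to clients
    http-after-response set-header X-Cache-Status HIT if { res.cache_hit }
    http-after-response set-header X-Cache-Status MISS unless { res.cache_hit }
    http-after-response set-header X-Cache-Name %[res.cache_name] if { res.cache_hit }
    server app1 192.0.2.10:8080
```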

SSL/TLS Enhancements

Before HAProxy 2.2, an SSL certificate and its associated key had to be combined into a single PEM-formatted file before HAProxy could load it. HAProxy 2.2 added support for loading SSL/TLS certificates separately from the certificate key through the ssl-load-extra-files global directive. However, it required the key to be named exactly like the certificate with “.key” appended. That meant that if the certificate was named mycert.crt, the key had to be mycert.crt.key. Several users reported on GitHub that this was inconvenient and that they would prefer mycert.key. A new global directive, ssl-load-extra-del-ext, instructs HAProxy to remove the extension before adding the new one. This requires your certificate files to have the “.crt” extension and is not compatible with bundle extensions (.ecdsa, .rsa, or .dsa).
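A minimal sketch of the new layout; the file paths and frontend name are placeholders. The ssl-load-extra-files line is spelled out for clarity, although the default mode ("all") should already include key files:

```haproxy
global
    ssl-load-extra-files key     # load the private key from a separate file
    ssl-load-extra-del-ext       # look for mycert.key instead of mycert.crt.key

frontend fe_tls
    mode http
    # expects /etc/haproxy/certs/mycert.crt and /etc/haproxy/certs/mycert.key
    bind :443 ssl crt /etc/haproxy/certs/mycert.crt
```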

A very powerful but infrequently used feature of HAProxy is generate-certificates, which allows HAProxy to dynamically generate SSL certificates when provided with a CA certificate and its private key. In this version, HAProxy adds a Subject Alternative Name (SAN) to all generated certificates, which modern browsers require. It now also supports chained CAs and can attach a trust chain in addition to the generated certificate; the chain is loaded from the ca-sign-file PEM file.
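A minimal sketch of a bind line using this feature; the frontend name and file paths are placeholders. The crt file is the fallback certificate, and ca-sign-file holds the signing CA certificate and its private key (plus, as of 2.3, the chain to attach):

```haproxy
frontend fe_intercept
    mode http
    # certificates for requested hostnames are generated and signed on the fly
    bind :443 ssl crt /etc/haproxy/default.pem generate-certificates ca-sign-file /etc/haproxy/ca.pem
```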

HAProxy can encrypt connections to backend servers using TLS and populate the hostname sent in the TLS SNI extension via the sni argument on the server line. It can also reuse connections to backend servers, but when a connection is made to a server over TLS with SNI, it's marked as private and not shared, because a later request might expect a different SNI. The community found that in many situations the SNI is hardcoded on the server line, for example with sni str(example.local), and in that case there's no risk in reusing the connection. This release allows reusing connections that use a hardcoded SNI and marks connections as private only if you've configured a variable expression for the SNI.
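A minimal sketch contrasting the two cases; backend names, addresses, and the CA bundle path are placeholders:

```haproxy
backend be_fixed_sni
    mode http
    http-reuse safe
    # constant SNI: as of 2.3, these connections can be shared and reused
    server app1 192.0.2.30:443 ssl verify required ca-file /etc/haproxy/ca-bundle.pem sni str(example.local)

backend be_dynamic_sni
    mode http
    http-reuse safe
    # SNI taken from the request: connections are still marked private
    server app2 192.0.2.31:443 ssl verify required ca-file /etc/haproxy/ca-bundle.pem sni req.hdr(host)
```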

A change now helps avoid accidentally loading an incorrect certificate: if a crt-list does not end with a newline, a warning is emitted indicating that the file might have been truncated.

Observability

HAProxy gives you best-in-class observability into the traffic that passes through it. It reports on the health of servers, measures error rates, tracks queue lengths, and counts connections. These 100 measurements appear on the HAProxy Stats page and can be fetched with the Runtime API's show stat command. Yet much more data could be included. The goal is to add metrics in a modular way so that you see only the data that's relevant to your use case. For example, some people leverage HAProxy's DNS resolver capabilities, while others don't.

The show stat Runtime API command has a new option, domain, which allows you to change the context of these statistics. Setting domain to proxy, the default value, gives you the core proxy statistics that were available before. Setting it to dns gives you statistics related to the DNS resolution HAProxy performs, which is pertinent when you've added a resolvers argument to a server line to resolve server addresses using DNS.

Here’s an example that displays DNS resolution statistics:

```
$ echo "show stat domain dns" | socat tcp-connect:127.0.0.1:9999 -
# id, send_error, valid, update, cname, cname_error, any_err, nx, timeout, refused, other, invalid, too_big, truncated, outdated,
nameserver1, 0, 20, 0, 0, 10, 10, 0, 0, 0, 0, 0, 0, 0, 0,
```

Future versions of HAProxy may extend this capability so that you’ll be able to switch contexts to see statistics for peers, SPOE agents, Lua modules, etc.

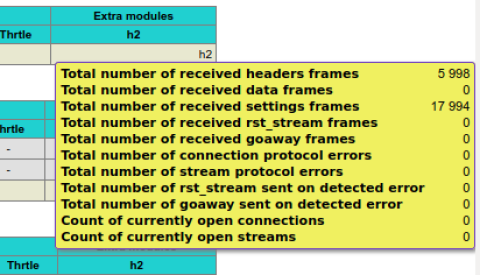

You can also enable extra statistics related to HTTP/2 on the HAProxy Stats page by using stats show-modules within your configuration:

```haproxy
frontend stats
    mode http            # the stats page requires HTTP mode
    bind :8404
    stats enable
    stats uri /
    stats refresh 10s
    stats show-modules
    no log
```

Here is the result when your proxy is configured for HTTP/2:

HTTP/2 stats

The Stats page displays a new field under the Wght column, which previously only showed the live or effective weight. In some cases, such as when using slowstart, it can be convenient to know what the effective weight is in comparison to the configured weight. The Wght column now contains the effective weight separated with a “/” followed by the configured weight.

The show stat Runtime API command now allows you to use show stat up to filter on servers that are up or show stat no-maint to show those that are not in maintenance mode. This is helpful when using DNS for Service Discovery with the server-template directive to filter out servers that have not been assigned addresses through service discovery, since those servers are put into maintenance mode automatically. It also shows a ratio of how many servers are seen as healthy. For example, seeing “UP (7/10)” tells you that there are seven healthy servers, but three are down.
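For context, a service-discovery setup of that kind looks roughly like the sketch below; the resolvers section name, nameserver address, and SRV record name are placeholders. Until DNS fills a slot, HAProxy keeps that server in maintenance mode, which is what show stat no-maint filters out:

```haproxy
resolvers dns_local
    nameserver ns1 192.0.2.53:53

backend be_app
    mode http
    # creates app1..app10; slots without a resolved address stay in maintenance
    server-template app 10 _http._tcp.app.example.local resolvers dns_local init-addr none check
```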

The Prometheus exporter received some new process and per-server metrics, as outlined here:

| Metric | Description |
|---|---|
| | The total number of failed DNS resolutions. |
| | The total number of bytes emitted. |
| | The total number of bytes emitted through a kernel pipe. |
| | The number of bytes emitted over the last elapsed second. |
| | The current number of unsafe idle connections. |
| | The current number of safe idle connections. |
| | The current number of connections in use. |
| | The estimated needed number of connections. |

A typo has also been fixed in the name of the haproxy_process_frontend_ssl_reuse metric.

This release lays the foundation for extending the default statistics and allows various HAProxy components, such as the HTTP/2 mux, to register their own counters. The Runtime API's show stat output has been extended with a new delimiter, a dash (“-”), after which additional dynamic fields can be added. They're called dynamic because they're shown only when the relevant component is in use. For example, you will see additional counters pertinent to HTTP/2, but only if you've configured a listener that uses that protocol. Simple monitoring systems that look at the core stats (whose comma-separated fields are documented in the HAProxy Management Guide) can stop when they encounter the dash, while more intelligent systems that match columns by key can read everything after it.

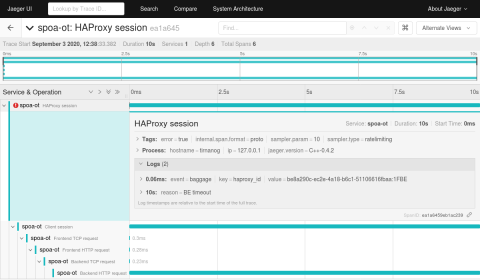

OpenTracing (SPOE)

Distributed tracing, according to opentracing.io, is “a method used to profile and monitor applications, especially those built using a microservices architecture. Distributed tracing helps pinpoint where failures occur and what causes poor performance.” OpenTracing provides an API specification that allows developers to add instrumentation to their application code.

The HAProxy Stream Processing Offload Engine filter provides polyglot extensibility and enables you to extend HAProxy in any language without modifying its core codebase. In conjunction with the HAProxy 2.3 release, we’re proud to announce an OpenTracing SPOA that allows HAProxy to send data directly to distributed tracing systems via the OpenTracing API. This is the first release and we’re actively working to improve it. Stay tuned for HAProxy 2.4.

You can find the GitHub repo with the information to get started available here: https://github.com/haproxytech/spoa-opentracing.

An OpenTracing HAProxy trace

A trace showing an error

HTTP Request Actions

HAProxy's HTTP actions allow you to accept, deny, and modify requests; they provide access control, header manipulation, path rewrites, redirects, and more. This release introduces two new HTTP request actions. The first, http-request replace-pathq, works like http-request replace-path, except that the replacement value may also contain a modified query string.

Consider this example of the old http-request replace-path action. Although it defines a regular expression with two capture groups (the first captures the URL path and the second the query string), you cannot insert the second capture group into the replacement value using \2. HAProxy simply ignores it and keeps the original query string.

```haproxy
# The old replace-path directive only replaces the path
# Add /foo to the end of the path
# /a/b/c?x=y --> /a/b/c/foo?x=y
http-request replace-path ([^?]+)(\?{1}[^?]+)? \1/foo
```

In contrast, you can capture the path and the query string using http-request replace-pathq and then either strip off the query string or insert it back, possibly with additional query parameters, as shown:

```haproxy
# Strip off the query string
# /a/b/c?x=y --> /a/b/c/foo
http-request replace-pathq ([^?]+)(\?{1}[^?]+)? \1/foo

# Add foo=bar to the query string
# /a/b/c?x=y --> /a/b/c/foo?x=y&foo=bar
# (without a query string, \2 is empty and the result would be /a/b/c/foo&foo=bar)
http-request replace-pathq ([^?]+)(\?{1}[^?]+)? \1/foo\2&foo=bar
```

The second new HTTP action is http-request set-pathq, which works similarly to http-request set-path, except that the query string is also rewritten. Unlike http-request replace-pathq, it does not take a regular expression and replacement value, but a formatted string to use as the new path. It can also be used to remove the query string, including the question mark. Here’s an example:

```haproxy
# The old set-path directive
# Prepend /foo to the path
# /a/b/c?x=y --> /foo/a/b/c?x=y
http-request set-path /foo%[path]

# The new set-pathq directive
# Strip off the query string
# /a/b/c?x=y --> /foo/a/b/c
http-request set-pathq /foo%[path]
```

New Sample Fetches & Converters

This table lists fetches that are new in HAProxy 2.3:

| Name | Description |
|---|---|
| | This extracts the request's URL path with the query string, which starts at the first slash. |
| res.cache_hit | Returns the boolean “true” value if the response has been built out of an HTTP cache entry, otherwise returns boolean “false”. |
| res.cache_name | Returns a string containing the name of the HTTP cache that was used to build the HTTP response if res.cache_hit is true, otherwise returns an empty string. |
| | Returns an integer corresponding to the server's initial weight. If <backend> is omitted, then the server is looked up in the current backend. |
| | Returns an integer corresponding to the current (or effective) server's weight. If <backend> is omitted, then the server is looked up in the current backend. |
| | Returns an integer corresponding to the user-visible server's weight. If <backend> is omitted, then the server is looked up in the current backend. |
| | Returns the DER-formatted chain certificate presented by the client when the incoming connection was made over an SSL/TLS transport layer. When used for an ACL, the value(s) to match against can be passed in hexadecimal form. |
| | Returns the DER-formatted chain certificate presented by the server when the outgoing connection was made over an SSL/TLS transport layer. When used for an ACL, the value(s) to match against can be passed in hexadecimal form. |

The following converters have been added:

| Name | Description |
|---|---|
| iif | Returns the <true> string if the input value is true. Returns the <false> string otherwise. |

The new iif converter, short for “immediate if”, acts as a ternary operator and can simplify many configurations, such as those that set the X-Forwarded-Proto header to http or https:

```haproxy
http-request set-header x-forwarded-proto %[ssl_fc,iif(https,http)]
```

It can also be combined nicely with the new res.cache_hit fetch:

```haproxy
http-after-response set-header x-cache %[res.cache_hit,iif(Hit,Miss)]
```

Lua

Lua is a lightweight, high-level programming language designed primarily for embedded use in applications. HAProxy introduced support for Lua 5.3 in HAProxy 1.6 and it brought a tremendous amount of flexibility when it comes to extending HAProxy. This release adds support for Lua 5.4, which was initially released in June 2020.

If you are upgrading from Lua 5.3, you will want to refer to the list of incompatibilities between 5.4 and 5.3. This release also exports the sample fetches http_auth() and http_auth_group(). You can now use regular expressions in fetches and converter arguments. This means that the regsub() converter is available. However, map converters based on regular expressions are not available because the map arguments are not supported. Finally, sample fetches and converters that require arguments are now supported as well.

Build

The following build changes were added:

DragonFly BSD was added as a build target.

Support for accept4() and getaddrinfo() was added to NetBSD.

Support for accept4(), closefrom(), and getaddrinfo() was added for FreeBSD, and the supported version was bumped to FreeBSD 10 and above.

Support for threads, accept4(), closefrom(), and getaddrinfo() was added for OpenBSD, and the supported version was bumped to OpenBSD 6.3 and above.

Support for getaddrinfo() was added to OS X.

Support for closefrom() was added for Solaris, and the supported version was bumped to Solaris 10 and above.

Support for the TCC compiler has been added.

An elegant check based on SSL_READ_EARLY_DATA_SUCCESS was added to detect OpenSSL early data support, addressing cases where BoringSSL impersonated OpenSSL 1.1.1 but did not provide OpenSSL-specific early data support.

Testing

Integration with the Varnish test suite was released with HAProxy 1.9 and aids in detecting regressions. The number of regression tests has grown significantly since then. This release adds seven new regression tests, which brings the total number to 101.

Deprecated and Removed Directives

The obsolete keyword monitor-net was removed. It supported only a single IPv4 network, was incompatible with SSL, and required HTTP/1.x. It is now recommended to use http-request return status 200 if { src 10.1.1.3 } instead.

The obsolete keyword mode health was removed. It was incompatible with SSL and worked only with HTTP/1. It is now recommended to use http-request return status 200 instead.

The global keyword debug has been removed. On occasion, it trapped users by disrupting their system's ability to boot. You can continue to use -d on the command line.

The nbproc directive is now deprecated and is set for removal in 2.5. It used too much memory, led to high network overhead (poor reuse, multiple health checks), lacked peer syncing and stats, caused problems with seamless reloads, and would not support QUIC at all. If nbproc is found with more than one process while nbthread is not set, a warning is emitted encouraging you to remove it or migrate to nbthread.

The grace directive has been marked as deprecated and is tentatively scheduled for removal in 2.4, with a hard deadline of 2.5. It was meant to postpone the stopping of a process during a soft-stop but is incompatible with soft reloading. We suspect that it is not widely used, but the warning will help us to know if some specific uses remain.
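A minimal migration sketch for the removed and deprecated keywords above; section names, ports, and the thread count are placeholders, and it only swaps the old keywords for the alternatives recommended in the text:

```haproxy
global
    nbthread 4               # replaces a former "nbproc 4"

frontend fe_main
    mode http
    bind :80
    # replaces "monitor-net 10.1.1.3/32"
    http-request return status 200 if { src 10.1.1.3 }

# replaces a former "mode health" listener
frontend fe_health
    mode http
    bind :8080
    http-request return status 200
```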

Miscellaneous

The strict-limits directive now defaults to on, so you'll get a startup error if you configure a maxconn that is too large for your system's limits.

The process no longer reports “proxy foo has started”.

An optimization for PCRE2 was made, which uses the JIT match when a JIT optimization has occurred. This should shorten the code path to call the match function.

Several deinit() fixes were made to improve the results from Valgrind.

Support for upgradable locks was added. These cut the scheduler overhead in half and reduce the locking time during map and ACL updates.
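If a configuration that started under 2.2 now fails because of the new strict-limits default, the sketch below shows where the setting lives. It assumes, based on our reading of the 2.3 configuration manual, that the default can be turned off with the no prefix; the maxconn value is a placeholder:

```haproxy
global
    maxconn 100000
    # strict-limits is on by default in 2.3: startup fails if the limits
    # needed for this maxconn cannot be applied.
    # Uncomment to fall back to the previous warn-only behavior:
    # no strict-limits
```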

Contributors

We would like to thank each and every contributor who was involved in this release. Contributors help in various forms such as discussing design choices, testing development releases, reporting detailed bugs, helping users on Discourse and the mailing list, managing issue trackers and CI, classifying Coverity reports, maintaining the documentation, operating some of the infrastructure components used by the project, reviewing patches, and contributing code.

The following list doesn’t do justice to all of the amazing people who offer their time to the project, but we wanted to give a special shout-out to individuals who have contributed code, and their area of contribution.

| Contributor | Area |
|---|---|
| Baptiste Assmann | BUG FIX, CLEANUP |
| Emeric Brun | BUG FIX, DOCUMENTATION, NEW FEATURE |
| David Carlier | OPTIMIZATION |
| Pierre Cheynier | CLEANUP, DOCUMENTATION |
| Matteo Contrini | DOCUMENTATION |
| Daniel Corbett | CONTRIB, DOCUMENTATION |
| Gilchrist Dadaglo | BUG FIX |
| William Dauchy | BUG FIX, CLEANUP, DOCUMENTATION, NEW FEATURE |
| Amaury Denoyelle | BUG FIX, DOCUMENTATION, NEW FEATURE, REORGANIZATION |
| Tim Düsterhus | BUG FIX, CLEANUP, DOCUMENTATION, NEW FEATURE |
| Christopher Faulet | BUG FIX, CLEANUP, DOCUMENTATION, NEW FEATURE, REGTESTS/CI |
| Thierry Fournier | NEW FEATURE |
| Shimi Gersner | NEW FEATURE |
| Sébastien Gross | DOCUMENTATION |
| Emmanuel Hocdet | BUG FIX |
| Olivier Houchard | BUG FIX |
| Bertrand Jacquin | NEW FEATURE |
| Harris Kaufmann | BUG FIX |
| Victor Kislov | BUG FIX |
| William Lallemand | BUG FIX, BUILD, CLEANUP, DOCUMENTATION, NEW FEATURE, REGTESTS/CI |
| Frédéric Lécaille | BUG FIX, NEW FEATURE |
| Jérôme Magnin | BUG FIX, DOCUMENTATION |
| Eric Salama | BUG FIX |
| Ilya Shipitsin | BUG FIX, BUILD, CLEANUP, REGTESTS/CI |
| Baruch Siach | BUILD |
| Brad Smith | BUILD, DOCUMENTATION |
| MIZUTA Takeshi | DOCUMENTATION, NEW FEATURE |
| Jackie Tapia | DOCUMENTATION |
| Willy Tarreau | BUG FIX, BUILD, CLEANUP, CONTRIB, DOCUMENTATION, NEW FEATURE, OPTIMIZATION, REGTESTS/CI, REORGANIZATION |
| Lukas Tribus | DOCUMENTATION |
| Remi Tricot-Le Breton | BUG FIX, NEW FEATURE, REGTESTS/CI |
| Miroslav Zagorac | BUILD |
| zurikus | NEW FEATURE |