The below information is deprecated as HAProxy Enterprise now offers a fully functional native WAF module that supports whitelist-based rulesets, blacklist-based rulesets, and support for ModSecurity rulesets!

Synopsis

I’ve already described WAF in a previous article, where I spoke about WAF scalability with Apache and ModSecurity.

One of the main issues with Apache and ModSecurity is performance. To address this issue, an alternative exists: naxsi, a Web Application Firewall module for nginx.

So by using Naxsi as the WAF and HAProxy as the load balancer, we’re able to build a platform that meets the following requirements:

Web Application Firewall: achieved by nginx and Naxsi

High-availability: application server and WAF monitoring, achieved by HAProxy

Scalability: ability to adapt capacity to the upcoming volume of traffic, achieved by HAProxy

DDoS protection: protection against blind, brute-force attacks and against slowloris, achieved by HAProxy

Content-Switching: ability to route only dynamic requests to the WAF, achieved by HAProxy

Reliability: ability to detect capacity overuse, achieved by HAProxy

Performance: deliver response as fast as possible, achieved by the whole platform

The picture below provides a better overview:

The LAB platform is composed of 6 boxes:

2 ALOHA load balancers (could be replaced by HAProxy 1.5-dev)

2 WAF servers: CentOS 6.0, nginx and Naxsi

2 Web servers: Debian + apache + PHP + dokuwiki

Nginx and Naxsi installation on CentOS 6

The purpose of this article is not to provide such a procedure, so please read this wiki article, which summarizes how to install nginx and Naxsi on CentOS 6.0.

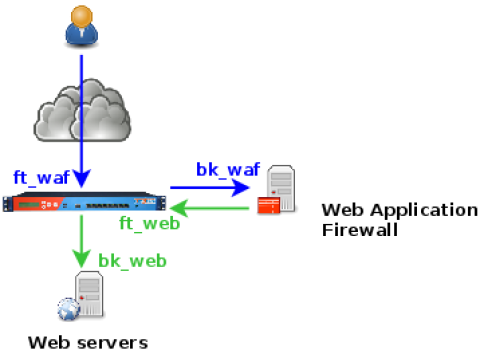

Diagram

The diagram below shows the platform with HAProxy frontends (prefixed by ft_) and backends (prefixed by bk_). Each farm is composed of 2 servers.

Configuration

Nginx and Naxsi

Configure nginx as a reverse proxy which listens in bk_waf and forwards traffic to ft_web. Meanwhile, Naxsi analyzes the requests.

server {

proxy_set_header Proxy-Connection "";

listen 192.168.10.15:81;

access_log /var/log/nginx/naxsi_access.log;

error_log /var/log/nginx/naxsi_error.log debug;

location / {

include /etc/nginx/test.rules;

proxy_pass http://192.168.10.2:81/;

}

error_page 403 /403.html;

location = /403.html {

root /opt/nginx/html;

internal;

}

location /RequestDenied {

return 403;

}

}

HAProxy load balancer configuration

The configuration below allows the following advanced features:

DDoS protection on the frontend

Abuser or attacker detection in bk_waf and blocking on the public interface (ft_waf)

Bypassing the WAF when it is overloaded or unavailable

######## Default values for all entries till next defaults section

defaults

option http-server-close

option dontlognull

option redispatch

option contstats

retries 3

timeout connect 5s

timeout http-keep-alive 1s

# Slowloris protection

timeout http-request 15s

timeout queue 30s

timeout tarpit 1m # tarpit hold time

backlog 10000

# public frontend where users get connected to

frontend ft_waf

bind 192.168.10.2:80 name http

mode http

log global

option httplog

timeout client 25s

maxconn 10000

# DDOS protection

# Use General Purpose Counter (gpc) 0 in SC1 as a global abuse counter

# Monitors the number of requests sent by an IP over a period of 10 seconds

stick-table type ip size 1m expire 1m store gpc0,http_req_rate(10s),http_err_rate(10s)

tcp-request connection track-sc1 src

tcp-request connection reject if { sc1_get_gpc0 gt 0 }

# Abuser means more than 100reqs/10s

acl abuse sc1_http_req_rate(ft_waf) ge 100

acl flag_abuser sc1_inc_gpc0(ft_waf)

tcp-request content reject if abuse flag_abuser

acl static path_beg /static/ /dokuwiki/images/

acl no_waf nbsrv(bk_waf) eq 0

acl waf_max_capacity queue(bk_waf) ge 1

# bypass WAF farm if no WAF available

use_backend bk_web if no_waf

# bypass WAF farm if it reaches its capacity

use_backend bk_web if static waf_max_capacity

default_backend bk_waf

# WAF farm where users' traffic is routed first

backend bk_waf

balance roundrobin

mode http

log global

option httplog

option forwardfor header X-Client-IP

option httpchk HEAD /waf_health_check HTTP/1.0

# If the source IP generated 10 or more HTTP errors over the defined period,

# flag the IP as abuser on the frontend

acl abuse sc1_http_err_rate(ft_waf) ge 10

acl flag_abuser sc1_inc_gpc0(ft_waf)

tcp-request content reject if abuse flag_abuser

# Specific WAF checking: a DENY means everything is OK

http-check expect status 403

timeout server 25s

default-server inter 3s rise 2 fall 3

server waf1 192.168.10.15:81 maxconn 100 weight 10 check

server waf2 192.168.10.16:81 maxconn 100 weight 10 check

# Traffic secured by the WAF arrives here

frontend ft_web

bind 192.168.10.2:81 name http

mode http

log global

option httplog

timeout client 25s

maxconn 1000

# route health check requests to a specific backend to avoid graph pollution in ALOHA GUI

use_backend bk_waf_health_check if { path /waf_health_check }

default_backend bk_web

# application server farm

backend bk_web

balance roundrobin

mode http

log global

option httplog

option forwardfor

cookie SERVERID insert indirect nocache

default-server inter 3s rise 2 fall 3

option httpchk HEAD /

# connect to the application server using the user IP

# provided in the X-Client-IP header set by the bk_waf backend

source 0.0.0.0 usesrc hdr_ip(X-Client-IP)

timeout server 25s

server server1 192.168.10.11:80 maxconn 100 weight 10 cookie server1 check

server server2 192.168.10.12:80 maxconn 100 weight 10 cookie server2 check

# backend dedicated to WAF checking (to avoid graph pollution)

backend bk_waf_health_check

balance roundrobin

mode http

log global

option httplog

option forwardfor

default-server inter 3s rise 2 fall 3

timeout server 25s

server server1 192.168.10.11:80 maxconn 100 weight 10 check

server server2 192.168.10.12:80 maxconn 100 weight 10 check

Detecting attacks

On the load balancer

The ft_waf frontend stick table tracks two pieces of information: http_req_rate and http_err_rate, which are respectively the HTTP request rate and the HTTP error rate generated by a single IP address.

HAProxy automatically blocks an IP which has generated 100 or more requests over a period of 10s, or 10 or more errors (WAF 403 responses included) in 10s. The IP is blocked for 1 minute, and the block is renewed as long as it keeps on abusing.

Of course, you can set the above values to whatever you need: it is fully flexible.
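
To make the two thresholds more concrete, here is a minimal Python sketch of the detection rule described above. It is only a toy model: it uses an exact sliding window rather than HAProxy's internal rate counters, it ignores the 1 minute expiry of the table entry, and the `AbuseTracker` class and its method names are hypothetical, introduced purely for illustration.

```python
from collections import defaultdict, deque

WINDOW_S = 10   # period used by http_req_rate(10s) and http_err_rate(10s)
MAX_REQS = 100  # request threshold of the "abuse" ACL in ft_waf
MAX_ERRS = 10   # error threshold of the "abuse" ACL in bk_waf


class AbuseTracker:
    """Toy per-IP tracker (hypothetical helper, not HAProxy's real counters)."""

    def __init__(self):
        self.requests = defaultdict(deque)  # ip -> timestamps of requests
        self.errors = defaultdict(deque)    # ip -> timestamps of error responses
        self.flagged = set()                # ips for which gpc0 would be > 0

    @staticmethod
    def _trim(events, now):
        # Drop events that have fallen out of the 10-second window.
        while events and now - events[0] >= WINDOW_S:
            events.popleft()

    def record(self, ip, now, is_error=False):
        """Record one request at time `now` (seconds).

        Returns True once the IP is flagged as an abuser.
        """
        if ip in self.flagged:
            return True
        reqs, errs = self.requests[ip], self.errors[ip]
        reqs.append(now)
        if is_error:
            errs.append(now)
        self._trim(reqs, now)
        self._trim(errs, now)
        if len(reqs) >= MAX_REQS or len(errs) >= MAX_ERRS:
            self.flagged.add(ip)
        return ip in self.flagged


tracker = AbuseTracker()
# 100 requests sent 50 ms apart all land inside a single 10-second window.
burst = [tracker.record("192.168.10.254", i * 0.05) for i in range(100)]
```

In this model, the client is flagged on the request that reaches the threshold, whereas a client sending one request per second never has more than 10 requests in the window and is never flagged.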

To know the status of IPs in your load balancer, just run the command below:

echo show table ft_waf | socat /var/run/haproxy.stat -

# table: ft_waf, type: ip, size:1048576, used:1

0xc33304: key=192.168.10.254 use=0 exp=4555 gpc0=0 http_req_rate(10000)=1 http_err_rate(10000)=1

The ALOHA load balancer does not provide a watch tool, but you can monitor the content of the table live with the command below:

while true ; do echo show table ft_waf | socat /var/run/haproxy.stat - ; sleep 2 ; clear ; done

On the WAF

Every Naxsi error is logged in /var/log/nginx/naxsi_error.log. For example:

2012/10/16 13:40:13 [error] 10556#0: *10293 NAXSI_FMT: ip=192.168.10.254&server=192.168.10.15&uri=/testphp.vulnweb.com/artists.php&total_processed=3195&total_blocked=2&zone0=ARGS&id0=1000&var_name0=artist, client: 192.168.10.254, server: , request: "GET /testphp.vulnweb.com/artists.php?artist=0+div+1+union%23foo*%2F*bar%0D%0Aselect%23foo%0D%0A1%2C2%2Ccurrent_user HTTP/1.1", host: "192.168.10.15:81"

The Naxsi log line is less obvious than the ModSecurity one. The rule which matched is provided by the argument idX=abcde (id0=1000 in the line above).

There were no false positives during the test: I had to craft a request to make Naxsi match it 🙂 .
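
Since the NAXSI_FMT payload is laid out like a URL query string, it can be split into its fields with a few lines of Python. The sketch below is illustrative only: the `parse_naxsi_fmt` helper is hypothetical, the sample line is an abridged copy of the one above, and it assumes the payload always ends at the `, client:` part added by nginx.

```python
import re
from urllib.parse import parse_qsl

# Abridged copy of the log line shown in the article (request shortened).
SAMPLE = (
    '2012/10/16 13:40:13 [error] 10556#0: *10293 NAXSI_FMT: '
    'ip=192.168.10.254&server=192.168.10.15'
    '&uri=/testphp.vulnweb.com/artists.php'
    '&total_processed=3195&total_blocked=2'
    '&zone0=ARGS&id0=1000&var_name0=artist, '
    'client: 192.168.10.254, server: , '
    'request: "GET /testphp.vulnweb.com/artists.php HTTP/1.1", '
    'host: "192.168.10.15:81"'
)


def parse_naxsi_fmt(line):
    """Return (fields, matches) extracted from one NAXSI_FMT log line."""
    found = re.search(r"NAXSI_FMT: (.*?)(?:, client: |$)", line.strip())
    if found is None:
        raise ValueError("not a NAXSI_FMT line")
    # The payload is a list of key=value pairs separated by '&'.
    fields = dict(parse_qsl(found.group(1), keep_blank_values=True))
    # Matched rules are numbered: zone0/id0/var_name0, zone1/id1/var_name1, ...
    matches = []
    n = 0
    while f"id{n}" in fields:
        matches.append({
            "zone": fields.get(f"zone{n}"),
            "id": int(fields[f"id{n}"]),
            "var_name": fields.get(f"var_name{n}"),
        })
        n += 1
    return fields, matches


fields, matches = parse_naxsi_fmt(SAMPLE)
```

For the sample line, `matches` holds a single entry: rule 1000 matched in the ARGS zone on the `artist` variable.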

Conclusion

Today, we saw that it’s easy to build a scalable, high-performance WAF platform in front of any web application.

The WAF is able to tell HAProxy which IPs to blacklist automatically (through error rate monitoring), which is convenient since the attacker won’t bother the WAF for a certain amount of time 😉

The platform can detect WAF farm availability and bypass the farm in case of total failure. We even saw that it is possible to bypass the WAF for static content if the farm is running out of capacity. The purpose is to deliver a good end-user experience without sacrificing too much security. Note that it is possible to route all the static content to the web servers (or a static farm) directly, whatever the status of the WAF farm.

This leads me to say that the platform is fully scalable and flexible.
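
The bypass logic summarized above boils down to a small decision function. The Python sketch below mirrors the no_waf, waf_max_capacity and static ACLs of the ft_waf frontend; the `choose_backend` function and its parameter names are hypothetical, and it assumes the path is passed without its query string.

```python
# Path prefixes taken from the "static" ACL of the ft_waf frontend.
STATIC_PREFIXES = ("/static/", "/dokuwiki/images/")


def choose_backend(path, waf_servers_up, waf_queue_len):
    """Pick the backend the way the ft_waf use_backend rules would."""
    no_waf = waf_servers_up == 0             # acl no_waf nbsrv(bk_waf) eq 0
    waf_max_capacity = waf_queue_len >= 1    # acl waf_max_capacity queue(bk_waf) ge 1
    static = path.startswith(STATIC_PREFIXES)  # acl static path_beg ...

    if no_waf:
        # No WAF server is alive: send everything straight to the web farm.
        return "bk_web"
    if static and waf_max_capacity:
        # WAF farm is queueing: only static content bypasses it.
        return "bk_web"
    return "bk_waf"
```

With both WAF servers up and an empty queue, every request goes to bk_waf. As soon as requests start queueing, only static paths skip the WAF, so dynamic requests are still inspected.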

Thanks to HAProxy, the architecture is very flexible: I could switch my Apache + ModSecurity to nginx + naxsi with no issues at all 🙂 This could be done as well for any third party WAF appliances.

Note that I did not try any of the advanced Naxsi features, such as learning mode or the UI.