At HAProxy Technologies, we develop and sell a load balancer appliance called ALOHA, which stands for Application Layer Optimization and High Availability. A few months ago, we managed to make it run on the most common hypervisors available:

VMware (ESX, vSphere)

Citrix XenServer

HyperV

Xen OpenSource

KVM

Since a load balancer appliance is network IO intensive, we thought it was a good opportunity to benchmark each hypervisor from a virtual network performance point of view. More and more companies use virtualization in their infrastructures, so we guessed that a lot of people would be interested in the results of this benchmark, and that’s why we decided to publish them on our blog.

Things to Bear in Mind About Virtualization

One interesting feature of virtualization is the ability to consolidate several servers onto a single piece of hardware. As a consequence, the resources (CPU, memory, disk, and network IOs) are shared between several virtual machines.

Another issue to take into account is that the hypervisor is like a new “layer” between the hardware and the OS inside the VM, which means that it may have an impact on the performance.

Benchmarking Hypervisors: Purpose

Our position is one of complete neutrality - we don't take sides when it comes to hypervisors. It's not our intention to express any positive or negative opinions about any particular hypervisor, so you can expect unbiased information from us.

Our main goal here is to check if each hypervisor performs well enough to allow us to run our virtual appliance on top of it. From the tests we’ll run, we want to be able to measure the impact of each hypervisor on virtual machine performance.

Benchmarking Platform & Procedure

To run these tests, we use the same server for all hypervisors and just swap the hard drive to run each hypervisor independently.

Hypervisor hardware specifications:

CPU quad-core i7 @3.4GHz

16G of memory

Network card 1G copper e1000e

We also ran the benchmark with several other network cards, but they gave poor results.

There is a single VM running on the hypervisor: The Aloha load balancer.

The Aloha Virtual Appliance we used is Aloha VA 4.2.5, with 1 GB of memory and 2 vCPUs. The client and WWW servers are physical machines plugged into the same LAN as the hypervisor. The client tool is inject, and the web server behind the Aloha VA is httpterm. So basically, the only thing that will change during these tests is the hypervisor.

The Aloha is configured in reverse-proxy mode (using HAProxy) between the client and the server, load balancing and analyzing HTTP requests. We focused mainly on virtual networking performance: the number of HTTP connections per second and associated bandwidth.

We ran the benchmark with different object sizes: 0, 1K, 2K, 4K, 8K, 16K, 32K, 48K, and 64K. By “HTTP connection,” we mean a single HTTP request with its response over a single TCP connection, like in HTTP/1.0.
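
To make that definition concrete, here is a minimal Python sketch of one such "HTTP connection": open a TCP connection, send a single HTTP/1.0 request, read the response until the server closes, and stop. This is only an illustration and not the inject tool we used; the function names `build_http10_request` and `fetch_once` are made up for this example.

```python
import socket


def build_http10_request(path: str, host: str) -> bytes:
    """Build a minimal HTTP/1.0 GET request (no keep-alive is requested)."""
    return f"GET {path} HTTP/1.0\r\nHost: {host}\r\n\r\n".encode("ascii")


def fetch_once(host: str, port: int, path: str = "/") -> bytes:
    """One TCP connection, one request, one response.

    Illustrative helper (not part of any benchmark tool): it reads
    until the server closes the connection, which marks the end of
    the response when no keep-alive is negotiated.
    """
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(build_http10_request(path, host))
        chunks = []
        while True:
            data = sock.recv(65536)
            if not data:  # empty read: the peer closed the connection
                break
            chunks.append(data)
    return b"".join(chunks)
```

A load generator repeats this cycle as fast as it can, so every request pays for a full TCP setup and teardown. That is why the small-object tests stress the connection rate rather than the bandwidth.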

Basically, the 0K object test is used to get the number of connections per second the VA can do, and the 64K object is used to measure the maximum bandwidth.

The maximum bandwidth will be 1 Gbps anyway, since we’re limited by the physical NIC.
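
As a rough sanity check on that ceiling, the sketch below computes how many objects per second a 1 Gbps link can carry at most for a given object size, and the payload bandwidth that a given connection rate implies. It assumes 1K means 1024 bytes and ignores all TCP, IP, Ethernet, and HTTP header overhead, so real figures will be lower. The function names are ours, for illustration only.

```python
LINK_BITS_PER_SECOND = 1_000_000_000  # 1 Gbps physical NIC (from the test platform)


def max_objects_per_second(object_size_bytes: int,
                           link_bps: int = LINK_BITS_PER_SECOND) -> float:
    """Upper bound on objects/s the link can carry, ignoring protocol overhead."""
    if object_size_bytes <= 0:
        # The 0K test is not limited by the link, so no bound applies here.
        raise ValueError("object size must be positive")
    return link_bps / (object_size_bytes * 8)


def payload_bandwidth_bps(connections_per_second: float,
                          object_size_bytes: int) -> float:
    """Payload bandwidth (bits/s) implied by a connection rate and object size."""
    return connections_per_second * object_size_bytes * 8


# Assumption: 64K = 64 * 1024 bytes.
# 1 Gbps / (65536 bytes * 8 bits) is roughly 1,907 objects per second.
ceiling_64k = max_objects_per_second(64 * 1024)
```

In other words, with 64K objects the link saturates at fewer than two thousand connections per second, which is why that test measures bandwidth rather than connection rate.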

We are going to benchmark network IO only, since this is what a load balancer uses most intensively. We won’t benchmark disk IOs…

Tested Hypervisors

We benchmarked a native Aloha against the Aloha VA running on the hypervisors listed below:

HyperV

RHEV (KVM based)

vSphere 5.0

Xen 4.1 on Ubuntu 11.10

XenServer 6.0

Benchmarking Results

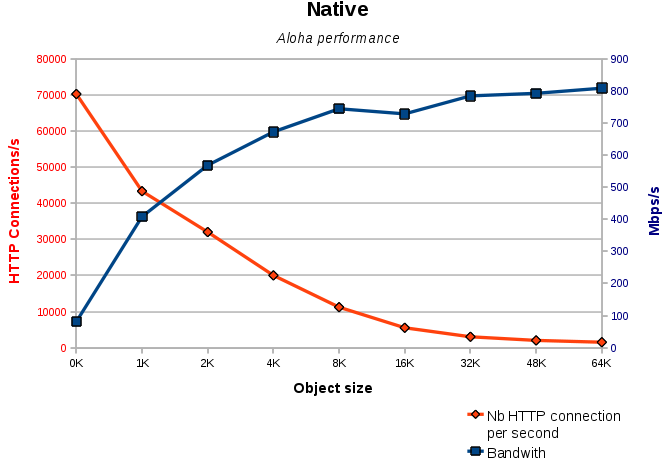

Raw server performance (native tests, without any hypervisor)

For the first test, we ran Aloha on the server itself without any hypervisor. That way, we’ll have some figures on the capacity of the server itself. We’ll use those numbers later in the article to compare the impact of each hypervisor on performance.

#1 Microsoft HyperV

We tested HyperV on a Windows 2008 R2 server. For this hypervisor, two network cards are available:

Legacy network adapter: emulates the network layer through the tulip driver. With this driver, we got around 1.5K requests per second, which is suboptimal.

Network adapter: requires the hv_netvsc driver, supplied by Microsoft as open source since Linux kernel 2.6.32. This is the driver we used for the test.

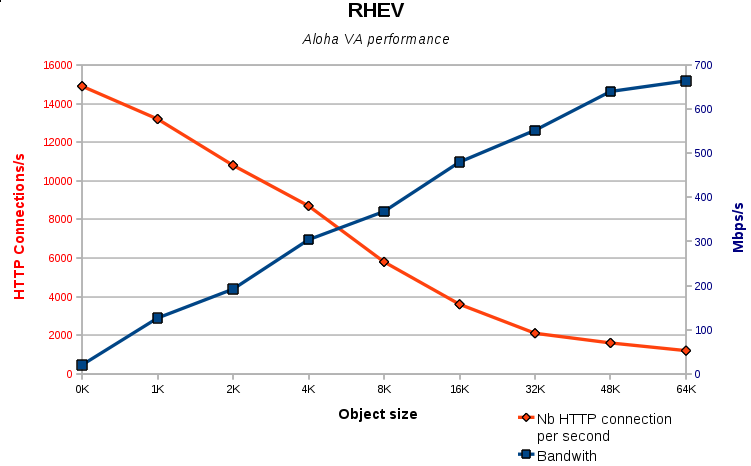

#2 RHEV 3.0 Beta (KVM based)

RHEV is Red Hat's hypervisor, based on KVM. For the virtualization of the network layer, RHEV uses the virtio drivers. Note that RHEV was still in beta when we ran this test.

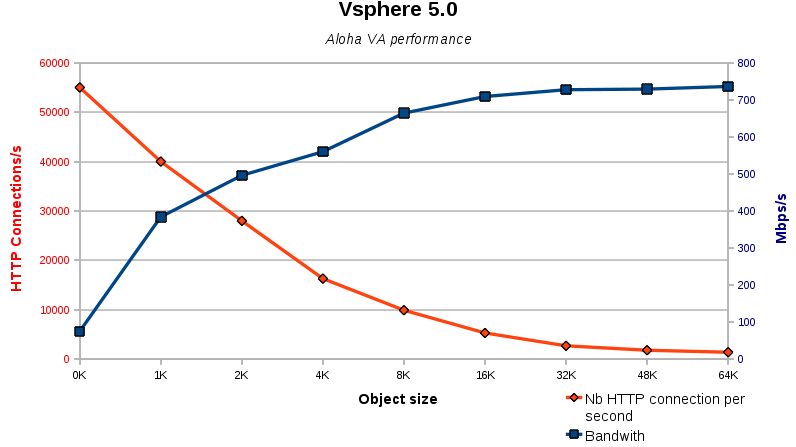

#3 VMware vSphere 5

There are 3 types of network cards available for vSphere 5.0:

1. Intel e1000: the e1000 driver emulates the network layer inside the VM

2. VMxNET 2: allows network layer virtualization

3. VMxNET 3: allows network layer virtualization

The best results were obtained with the vmxnet2 driver.

We tested neither vSphere 4 nor ESX 3.5.

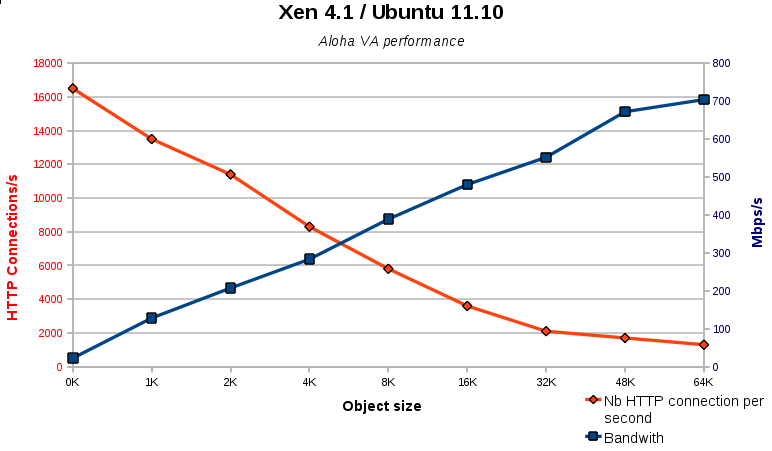

#4 Xen OpenSource 4.1 on Ubuntu 11.10

Since CentOS 6.0 does not provide Xen OpenSource in its official repositories, we decided to use the latest Oneiric Ocelot Ubuntu server distribution with Xen 4.1 on top of it.

Xen provides two network interfaces:

an emulated one, based on the 8139too driver

a virtualized network layer, xen-vnif

Of course, the results are much better with xen-vnif, so we used it for the test.

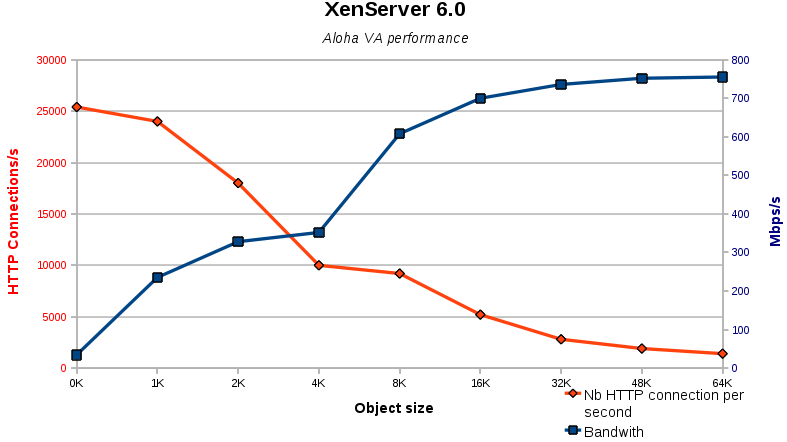

#5 Citrix XenServer 6.0

The network driver used for XenServer is the same as the one used for the Xen OpenSource version: xen-vnif.

Hypervisors Comparison

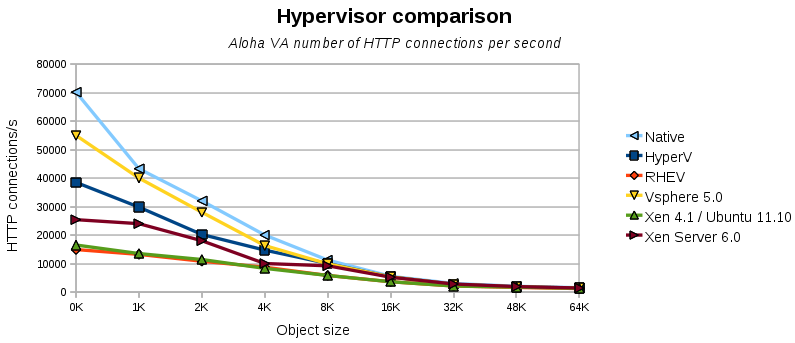

1. HTTP connections per second

The graph below summarizes the HTTP connections per second capacity for each hypervisor. It shows us the hypervisor overhead by comparing the light blue line, which represents the server capacity without any hypervisor, to each hypervisor line.

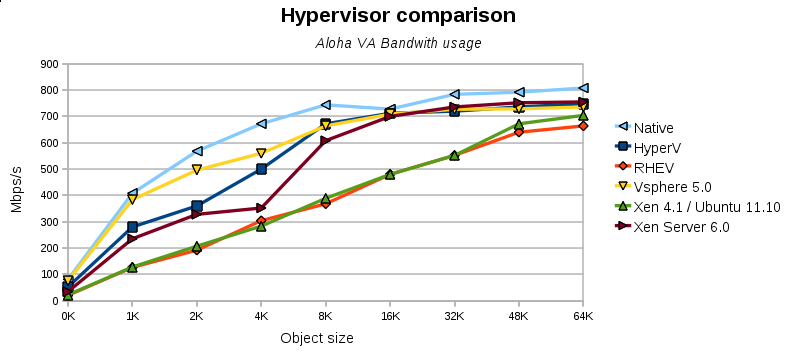

2. Bandwidth usage

The graph below summarizes the bandwidth achieved with each hypervisor. It shows us the hypervisor overhead by comparing the light blue line, which represents the server capacity without any hypervisor, to each hypervisor line.

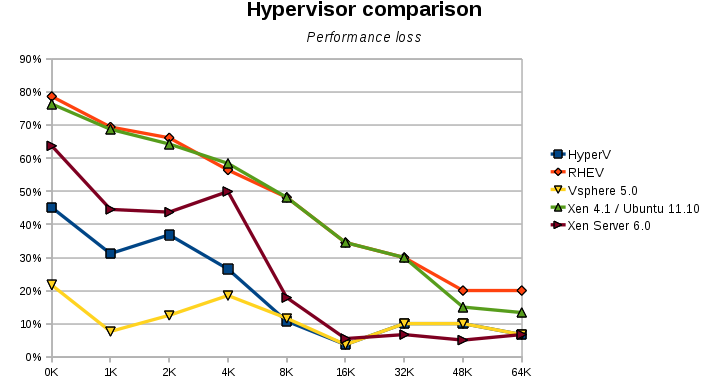

3. Performance loss

Well, comparing hypervisors to each other is nice, but remember, we wanted to know how much performance was lost in the hypervisor layer. The graph below shows, in percentage terms, the loss generated by each hypervisor when compared to the native Aloha. The higher the percentage, the worse it is for the hypervisor.
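
For readers who want to compute the same metric from their own measurements, the loss is simply the relative drop from the native figure. The sketch below shows the calculation; the function name is ours, and the numbers in the example are hypothetical placeholders, not results from this benchmark.

```python
def performance_loss_percent(native: float, virtualized: float) -> float:
    """Relative loss of the virtualized figure against the native one, in percent.

    Both arguments must use the same unit (connections/s or bits/s).
    A negative result would mean the virtualized run was faster.
    """
    if native <= 0:
        raise ValueError("native figure must be positive")
    return 100.0 * (native - virtualized) / native


# Hypothetical example: 50,000 conn/s native vs 30,000 conn/s in a VM.
loss = performance_loss_percent(50_000, 30_000)  # 40.0 (percent)
```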

Final Verdict: The Winner Is...

The hypervisor layer has a non-negligible impact on the networking performance of a virtualized load balancer running in reverse-proxy mode. But the same would be expected for any VM that is network IO intensive.

The shorter the connections, the bigger the impact. For very long connections like TSE, IMAP, etc., virtualization might make sense.

vSphere seems ahead of its competitors from a performance point of view. HyperV and Citrix XenServer deliver interesting performance. RHEV (KVM) and Xen open source still have room to improve.

Even though the VM no longer accesses the hardware directly, the hardware still has a huge impact on performance.

For example, on vSphere, we could not go higher than 20K connections per second with a Realtek NIC in the server. With the Intel e1000e driver, we got up to 55K connections per second. So even when you use virtualization, hardware counts.